Linus Nwankwo*, Björn Ellensohn, Christian Rauch, Elmar Rueckert

Chair of Cyber-Physical Systems, Technical University of Leoben, Austria

State-of-the-art language-conditioned HRI frameworks treat communication as a unidirectional process. SIL fundamentally changes this dynamic.

Existing frameworks: unidirectional, reactive, no learning

SIL: bidirectional, proactive, evolving

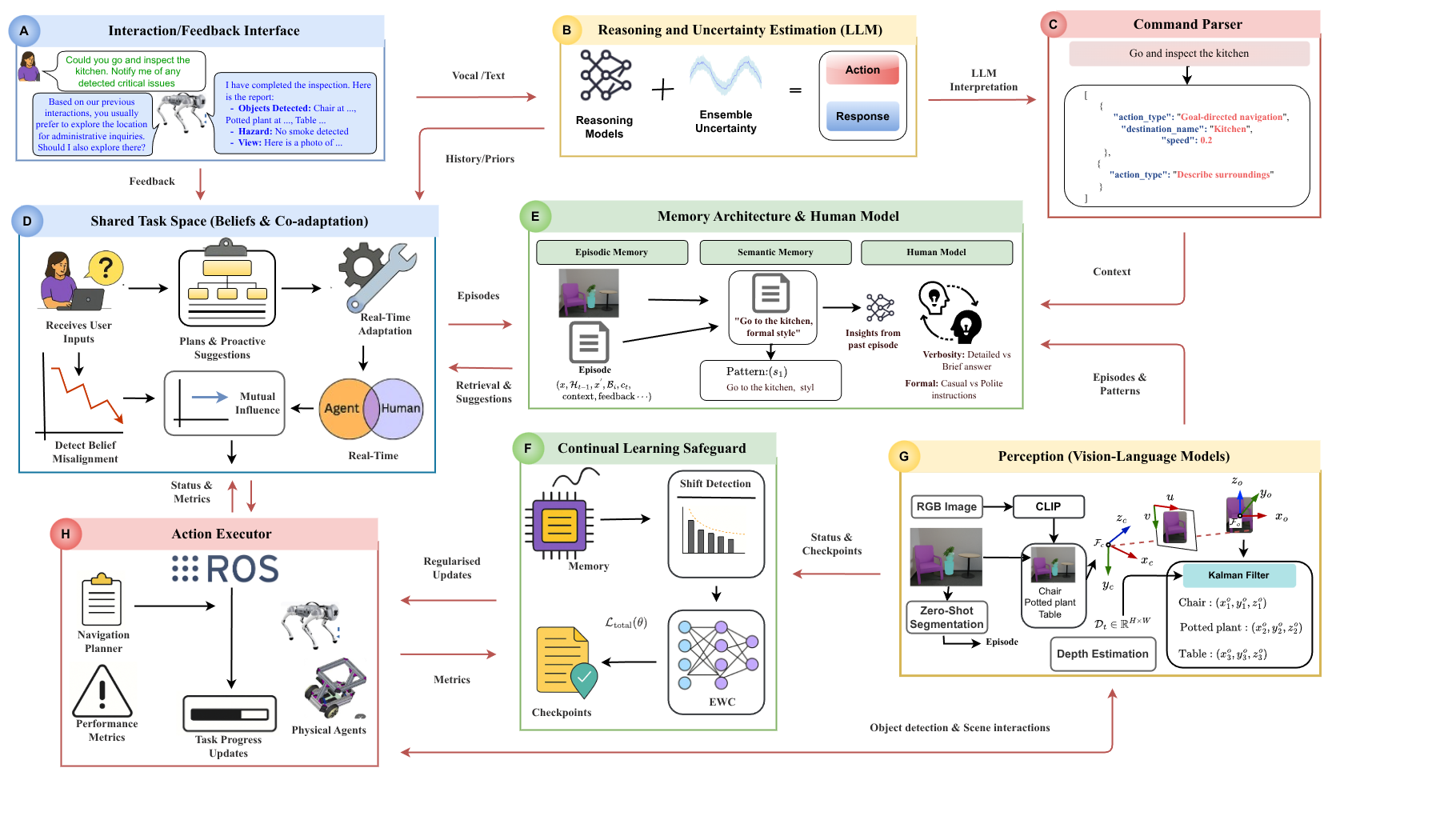

SIL introduces several novel components that together enable co-adaptive human-robot interaction.

We identify and formalise the unidirectional learning problem in language-conditioned HRI, where the agent maintains a static belief B_A^static with ∂θ/∂t = 0, imposing the entire alignment burden on the human.

We model co-adaptation as belief-state evolution. Both human and agent maintain structured belief states B_H and B_A that co-evolve within Z ⊆ ℝ^d, each modulated by the other's latent embedding via learned influence vectors.

We employ pre-trained foundation models (SAM for zero-shot segmentation, CLIP for vision-language alignment) for spatial perception, paired with a lightweight latent encoder ϕ : ℝ^768 → Z. GPT-4o provides ensemble-based reasoning and uncertainty quantification.

SIL employs a dual-component memory (episodic buffer + semantic consolidation) augmented with Elastic Weight Consolidation (EWC). EWC estimates parameter importance via Fisher information F(k) to prevent catastrophic forgetting of learned task representations (λ = 1000).

Simulation Demo

SIL agent in Gazebo with Unitree Go1 quadruped

Real-World Demo

SIL agent on physical robot


Belief states B_H and B_A co-evolve within Z ⊆ ℝ^d (d = 256). Bidirectional influence via learned weight matrices W_HA, W_AH. Alignment measured by ρ_t; clarification triggered when ρ_t < τ_mis = 0.6.
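The clarification trigger described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes the alignment score is the cosine similarity between the two belief embeddings, which the text does not state, and the function names `cosine_alignment` and `needs_clarification` are hypothetical. Only the threshold 0.6 comes from the text.

```python
import math

TAU_MIS = 0.6  # misalignment threshold quoted in the text


def cosine_alignment(b_h, b_a):
    """Cosine similarity between human and agent belief embeddings.

    Assumption: the alignment score is a cosine similarity; the page
    does not give its exact definition.
    """
    dot = sum(x * y for x, y in zip(b_h, b_a))
    norm_h = math.sqrt(sum(x * x for x in b_h))
    norm_a = math.sqrt(sum(y * y for y in b_a))
    if norm_h == 0.0 or norm_a == 0.0:
        return 0.0  # treat an empty belief as fully misaligned
    return dot / (norm_h * norm_a)


def needs_clarification(b_h, b_a, tau=TAU_MIS):
    """Return True when alignment falls below the threshold."""
    return cosine_alignment(b_h, b_a) < tau


if __name__ == "__main__":
    # Toy 2-dimensional beliefs; the page uses d = 256.
    print(needs_clarification([1.0, 0.0], [0.0, 1.0]))  # orthogonal beliefs
    print(needs_clarification([1.0, 0.0], [1.0, 0.1]))  # nearly identical beliefs
```

In this sketch the agent would ask a clarifying question only in the first case, where the two beliefs share no direction in the latent space.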

SAM + CLIP for zero-shot segmentation and open-vocabulary recognition with dual-fidelity filtering. GPT-4o ensemble (K temperatures) for reasoning. Lightweight encoder ϕ : ℝ^768 → Z bridges perception to task space.
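One simple way to turn K sampled responses into an answer plus an uncertainty estimate is majority voting with a disagreement score. The sketch below is an assumption about how such an ensemble could be aggregated, not the method used in SIL; it takes already-collected response strings as input and makes no API calls. The function name `ensemble_vote` is hypothetical.

```python
from collections import Counter


def ensemble_vote(responses):
    """Aggregate K responses by majority vote.

    Returns the most common response and an uncertainty score defined
    here (as an assumption) as the fraction of responses that disagree
    with the majority.
    """
    if not responses:
        raise ValueError("at least one response is required")
    counts = Counter(responses)
    answer, votes = counts.most_common(1)[0]
    uncertainty = 1.0 - votes / len(responses)
    return answer, uncertainty


if __name__ == "__main__":
    # Hypothetical outputs sampled at K = 4 different temperatures.
    samples = ["pick cup", "pick cup", "pick mug", "pick cup"]
    print(ensemble_vote(samples))
```

A high disagreement score could then feed the same clarification logic as a low alignment score, although the page does not say how the two signals are combined.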

Episodic memory for interaction-specific traces (2000 episodes, 60 days). Semantic memory consolidates patterns. Belief-aware retrieval balances semantic similarity (w_s = 0.6) and belief alignment (w_b = 0.4).
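The belief-aware retrieval weighting can be illustrated with a small ranking sketch. It assumes the two terms are combined as a weighted sum, which is the most direct reading of the weights 0.6 and 0.4 but is not confirmed by the text; the memory tuple layout and the names `retrieval_score` and `retrieve` are hypothetical.

```python
W_S = 0.6  # weight on semantic similarity (from the text)
W_B = 0.4  # weight on belief alignment (from the text)


def retrieval_score(semantic_sim, belief_align, w_s=W_S, w_b=W_B):
    """Weighted sum of the two similarity terms (assumed combination rule)."""
    return w_s * semantic_sim + w_b * belief_align


def retrieve(memories, k=1):
    """Return the labels of the top-k memories.

    Each memory is a hypothetical (label, semantic_sim, belief_align) tuple
    with both similarities already computed in [0, 1].
    """
    ranked = sorted(
        memories,
        key=lambda m: retrieval_score(m[1], m[2]),
        reverse=True,
    )
    return [label for label, _, _ in ranked[:k]]


if __name__ == "__main__":
    episodes = [
        ("fetch-red-cup", 0.9, 0.1),   # semantically close, poorly aligned
        ("fetch-blue-cup", 0.6, 0.9),  # less similar, well aligned
    ]
    print(retrieve(episodes, k=1))
```

With these toy numbers the second episode wins (0.72 against 0.58), showing how belief alignment can outweigh a higher semantic match.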

Elastic Weight Consolidation estimates parameter importance via Fisher information F(k). Task-shift detection via performance windows (10/20 episodes). Importance coefficient λ=1000 balances plasticity and stability.
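For reference, the standard EWC regulariser is the quadratic penalty (λ/2) Σ_i F_i (θ_i − θ*_i)², where θ* are the parameters after the previous task and F_i is the diagonal Fisher information. The sketch below implements that textbook form on plain Python lists; whether SIL uses exactly this variant is an assumption, and the names `diagonal_fisher` and `ewc_penalty` are hypothetical. Only λ = 1000 is taken from the text.

```python
LAMBDA = 1000.0  # importance coefficient quoted in the text


def diagonal_fisher(per_sample_grads):
    """Estimate diagonal Fisher information as the mean squared gradient.

    per_sample_grads: list of gradient vectors, one per sample, each a
    list of floats with one entry per parameter.
    """
    n = len(per_sample_grads)
    dim = len(per_sample_grads[0])
    return [sum(g[i] ** 2 for g in per_sample_grads) / n for i in range(dim)]


def ewc_penalty(theta, theta_star, fisher, lam=LAMBDA):
    """Standard EWC penalty: (lam / 2) * sum_i F_i * (theta_i - theta*_i)^2."""
    return 0.5 * lam * sum(
        f * (t - s) ** 2 for t, s, f in zip(theta, theta_star, fisher)
    )


if __name__ == "__main__":
    theta_star = [1.0, 1.0]          # parameters after the previous task
    theta = [1.0, 2.0]               # current parameters
    fisher = diagonal_fisher([[1.0, 0.1], [0.0, 0.1]])
    print(fisher, ewc_penalty(theta, theta_star, fisher))
```

The penalty is added to the loss of the new task, so parameters with large Fisher values are held close to their previous values while unimportant ones remain free to change.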

We conducted a total of 350 interaction episodes distributed across the five task domains below: EIF (n = 120), MIIR (n = 60), QOR (n = 80), PDS (n = 40), and LPL (n = 50). Each experiment was repeated over 5 independent runs to account for variability in LLM sampling and encoder initialisation.

Ablation variants compared against full SIL. Bars show averaged TCR (%) per domain.

Yellow paths show agent trajectories.

@article{nwankwo2025beyond,
  title={SIL: Symbiotic Interactive Learning for Language-Conditioned Human-Agent Co-Adaptation},
  author={Nwankwo, Linus and Ellensohn, Bj{\"o}rn and Rauch, Christian and Rueckert, Elmar},
  journal={arXiv preprint arXiv:2511.05203},
  year={2025}
}